There is been plenty of activity and excitement recently concerning Microsoft’s aggressive move to obtain a large portion of Oracle’s market share. There are always pros and cons to any situation, so I’m going to illustrate both, although as a Microsoft partner, we see more pros than cons.

We’re going to dive into five major questions that anyone considering migrating needs to answer specific to their own organizational culture and business needs.

- WHY move from Oracle to SQL Server 2016?

- How will it empower our business?

- What are the business, financial and migration impacts for us?

- How do we assess and mitigate risk when considering migration?

- What assistance is available to us to make this move?

So the biggest and obviously most important question here is, WHY should a company move away from Oracle to SQL Server 2016?

The fact is that Oracle requires a significant investment. Anyone that has adopted this technology also realizes the size of the footprint throughout their technology stack it probably has. Nobody purchases Oracle licensing for small, or even medium businesses. This is and has always been a very large scale solution.

Pragmatic Works has completed several conversions from Oracle to SQL Server, and the thing we constantly hear is, “We have a lot of money, as well as processes, tied to this product. Why would we want to change?”

Let’s dive into that, but first let’s cover some Economics 101. I received my undergraduate education in computer science, so when I began my MBA, most of these concepts were new to me. The most important aspect of any technology investment is the Return on Investment, or ROI. EVERY single senior financial is going to complete this analysis and come up with some concrete numbers to justify the investment. This is calculated as:

Gain from Investment – Cost of Investment

Cost of Investment

So if the gain from the investment exceeds the cost of the investment by a decent margin (why we divide by cost again), then it’s considered a solid investment, worth the time and money, and things move forward.

The Cost of Investment can be defined as the physical cost of the SQL Server hardware and software, plus the cost to migrate all objects to SQL Server. The physical cost is a clear, definable cost. The migration cost is highly variable and depends on the current implementation and will be discussed in further depth shortly (hint, Microsoft will help foot the bill).

So let’s dig into the costs we know.

Comparing SQL Server Enterprise’s total price of $27,496 to Oracle’s $346,000 is a HUGE difference. The reason is quite simple. SQL Server 2016 is being labeled as “All Inclusive”, or just a buffet including steak, potatoes, drink, dessert, etc. Oracle sells individual products “a la carte” which would be akin to ordering a steak, then ordering potatoes, then a drink, a dessert, etc.

Let’s look a little closer at those individual costs to make sure they add up to what is truly advertised.

| SQL Server | Oracle Database (All Options not Shown) |

|

| Enterprise Base License (includes 1 year of support) |

$27,496 |

$95,000 |

| Data Available | Included | $11,500 (Active Data Guard) (times 2 for 2 nodes) $23,000 Total |

| Performance and Scale | Included | $11,500 (Advanced Compression) $23,000 (Database In-Memory) $11,500 (Partitioning) $11,500 (Real Application Testing) $7,500 (Diagnostics Pack) $5,000 (Tuning Pack) $140,000 Total |

| Enterprise Security | Included Free Download |

$15,000 (Advanced Security) $11,500 (Label Security) $35,000 Total |

| Any Data, Built In | Included | $17,500 (Spatial & Graph)

$35,000 Total |

Total Cost |

$27,496 |

$346,000 |

What does this all mean? Going back to Economics 101….

In economics and business decision-making, a sunk cost is a cost that has already been incurred and cannot be recovered. Sunk costs (also known as retrospective costs) are sometimes contrasted with prospective costs, which are future costs that may be incurred or changed if an action is taken.

For a great example on sunk costs, check out the U.S. Defense’s failed F-35 program. It’s another way of saying, “Throwing good money after bad.”

The point here is that it doesn’t matter what has already been spent on Oracle licensing and infrastructure, it’s whether the technology satisfies the business in every aspect in the future, including financial, business, technical, etc.

In microeconomics, economies of scale are the cost advantages obtained due to size, output, or scale of operation, with cost per unit of output generally decreasing with increasing scale as fixed costs are spread out over more units of output. So it’s the cost of the product decreasing as the quantity increases.

Economies of scope is an economic theory stating that the average total cost of production decreases as a result of increasing the number of different goods produced. The concept here is that Microsoft is not investing all of their resources in just ONE product, they have the same resources contributing to several products, thus reducing the overall cost for the collective unit. This all results in the following:

- Extreme flexibility in product design and product mix

- Rapid responses to changes in market demand, product design and mix, output rates, and equipment scheduling

- Greater control, accuracy, and repeatability of processes

- Reduced costs from less waste and lower training and changeover costs

- More predictability (e.g., maintenance costs)

- Less risk

- ….and more

The Core of the Everything is, Am I Going to Get my Gain from Investment?

Yes, you will, by empowering the business through data.

Gain from the Investment by Providing for the Decision Makers

- Robust Data Infrastructure

- Powerful Integration

- Analytics Tools

- Free and Simple Access to Information

- Intuitive Interface

So There are Lots of Goodies, but Does the Technology Perform?

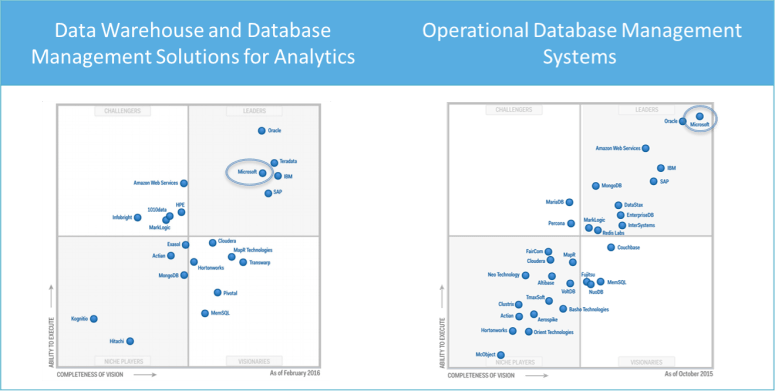

Yes, it does! But don’t take it from me, let’s check out what the leading authority on technology assessment, and a completely non-biased company, Gartner Inc., says.

Breaking it down by subject, here’s what they say about Advanced Analytics Platforms and BI:

How about database performance? Does it perform? Gotcha covered….

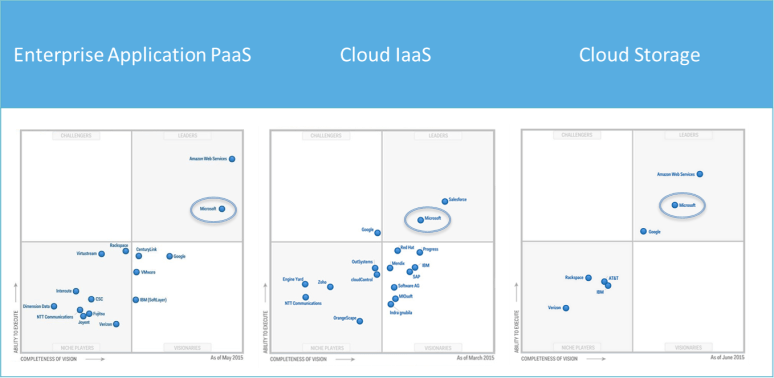

Okay, but at SOME point, you may want to move the cloud. Gotcha covered….

- Ask 10 Oracle DBAs the top reason to use Oracle and 9 times, you’ll hear “It has better security!” Wrong….

- The other 1 may say “It performs better” Nope….

- “What about this whole Mobile BI movement I hear so much about?” Gotcha covered….

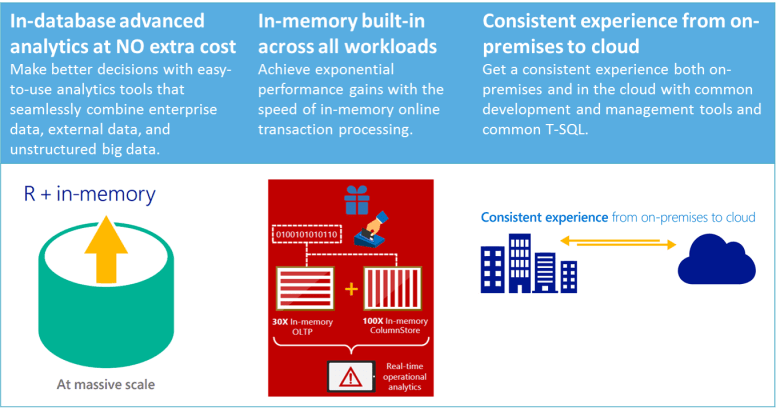

- Want to use R in-database?

- In-memory across workloads?

- Consistent experience from on-premise to cloud?

But wait, there’s more!!

Built on SQL Server 2016, SQL Server on Linux gives you the power to build and deploy intelligent applications on a single data management and business analytics platform. The preview is currently available to play around with, but the production version is slated for a mid-2017 release.

Migration Process

This process generally consists of the following 5 phases, not necessarily executed in order, sometimes reiterated , etc.

Analyze and Strategize

- Project Road map

- Validate ROI

Architect and Validate

- Complete High Level Design

- Justify Investment

- Assess Migration Technical Issues

Complete Detailed Design

- Implementation Plan

- Create POC

Implement

- Complete Implementation

- Testing & UAT

- Production Cut-over

Manage

- Monitor Results

- Optimize

Sometimes phases affect other phases and require re-work. For example, if we began our detailed design but discover technology inadequacies, or the architecture cannot fully satisfy the business, the Architect and Validate step may need to be re-iterated.

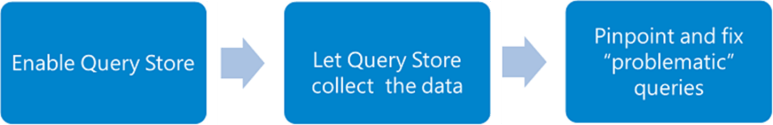

Thankfully, there a couple of very helpful tools that can mitigate risk, as well as speed up the process.

Migration Tools

Microsoft Assessment and Planning (MAP) Toolkit for SQL Server

Using the MAP tool, get a complete network-wide inventory of SQL Server and Oracle instances, edition identification, and hardware assessment.

With MAP Toolkit, utilize the following reports:

- Discovery and Inventory: Database inventory and reporting of Oracle instances on Windows and Linux based servers

- Detailed Reporting: Covers specific SQL Server instances that include component name, version, edition, and more. Includes wide-ranging details of databases and server instances that can be used for consolidation. Oracle schema reporting identifies the size and use of each schema, estimates of the complexity of migration.

- Hardware and platform details: Details for computers that are running heterogeneous database instances, including determining whether the machines are virtual or physical.

At the end of this process, you should have the capacity to do each of the following effectively:

- Scope for Server & Storage Consolidation, Migrations and Upgrade

- Utilize the Existing Infrastructure

- Streamline SQL Server Licensing

- Reduce Administrative Costs

SQL Server Migration Assistant (SSMA) for Oracle

According to Microsoft, use the tool to “quickly convert Oracle database schemas to SQL Server schemas, upload the resulting schemas into SQL Server and migrate data from Oracle to SQL Server.”

According to me personally, use the tool to gather all metadata for all Oracle objects to perform deeper analysis. Utilize Visual Studio for creating and maintaining databases and objects, as well as ETL development for data population. Using formal development tools allows for a more iterative, reproducible, quantifiable and testable implementation.

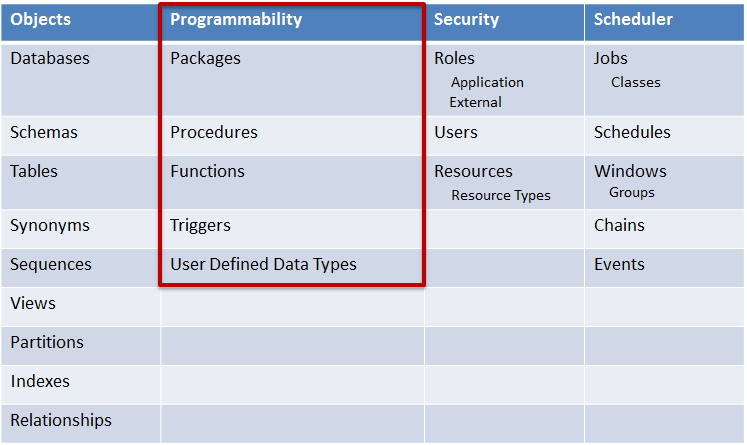

Use SSMA for Oracle to Discover Objects

The first 3 columns can be discovered by SSMA, however all Scheduler items must be manually discovered and assessed.

So we’ve found a list of all objects, now we just complete them all at the same time in a nice linear fashion, right? Wrong!

Which of These Groups has the Highest Risk?

The highest risk item, BY FAR, is the programmability. Creating every other object is a fraction of the time that re-writing programmable items will require. I estimate that 75% or more of the object conversion effort will be converting programmability, and specifically PL/SQL.

This group include Packages, Stored Procedures, Functions and Triggers.

Fortunately, MOST programmable objects perform CRUD (Create, Read, Update and Delete) operations

- If following ANSI SQL standards, platform independent syntax, they should be using COALESCE, CASE, JOIN, CAST, etc.

- The majority of conversion work is when directly converting PL/SQL to T-SQL

Additional business logic embedded in the PL/SQL increases conversion time substantially, if not exponentially.

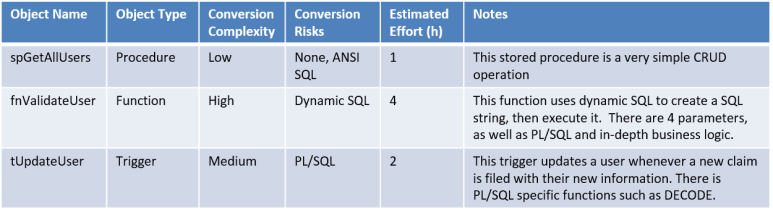

Complete In-Depth Programmability Assessment

Compile and detail a matrix consisting of the following items:

This can also be modified to a working conversion document by adding columns such as ‘Converted to T-SQL’, ‘Unit Tested’, ‘UAT’, ‘Deployed’, notes for each column, etc.

This should be a deliverable that can be taken at the completion of the assessment and handed to any technology solution company (hopefully Pragmatic Works) for completion. The time and cost will have clear and defined boundaries.

Use ONE Tool to Kick off a Changeover Process, or “flip the switch”, on an Instance-by-Instance Basis

I prefer SSIS, because it does SEVERAL things very well (and I’m kinda good at it), AND it’s a Microsoft BI product.

- Execute Script Task (Create Objects)

- Data Flows (Migration of Data)

- Troubleshoot (Using Pragmatic Works’s BI xPress)

- Execution Time (at any almost granularity)

- Error Logging

- Audit Framework

- Troubleshooting

- Notifications for Scheduled Migration Tests

Using SSIS, perform the following tasks programmatically in order (3 steps):

(Following Microsoft Data Warehouse Toolkit Recommendation)

Create (and drop) objects

- Schemas

- Tables

- Synonyms, Sequences, Views

Populate Data

- Enable Identity Insert

- Cast/Convert

- Lookup Whenever Necessary

Create Optimizations/Integrity

- Partitions

- Indexes

- Relationships

- Constraints

Migration Support

So how can we get a little bit of help with all of this?! Who can help us?

Why our buddies at Microsoft can help us of course!

Easier than ever to switch – simplified migration tools, free training for Oracle DBAs, and starter deployment services help you break free of your current platform. Enjoy flexible deployment options for your applications whether maintaining on premise installations or optimize costs and adopt the cloud with Azure, the most trusted cloud in the industry.

….and let’s be honest about all of this, it isn’t free nor cheap.

And they have! Microsoft has put their money where their mouth is and they’re offering to help out with this, not just with tools but financially.

Training and services are only available through June 30, 2016, but take advantage of:

- Free SQL Server licenses (with Software Assurance)

- Free training – SQL Server Essentials for the Oracle DBA

- Subsidized deployment services to kick-start migration

Check out the Offer Datasheet for additional details.

We may or may not have convinced you to pick SQL Server 2016 over Oracle, but you have to admit that it’s a rather compelling argument and AT LEAST needs serious consideration.